ICT and the Early Grade Reading Assessment: From Testing to Teaching

The science of early literacy acquisition and proven techniques for teaching reading are both backed by years of experimental research, as well as practical experience implementing programs to improve reading.

Experts agree that measuring reading progress early has several benefits: it informs remediation, provides either a snapshot of children’s reading abilities or a picture of their progress over time, and tells stakeholders and policy makers which programs or methods work.

Frequent diagnostic testing at national or classroom levels can serve to establish benchmarks, and monitoring progress against these benchmarks can be a key factor in motivating schools, teachers, students, and families (Davidson, Korda, & White Collins, 2011).

The Education for All Fast Track Initiative recently set two indicators related to reading skills:

- Proportion of students who, after two years of schooling, demonstrate sufficient reading fluency and comprehension to “read to learn”

- Proportion of students who are able to read with comprehension, according to their countries’ curricular goals, by the end of primary school

These indicators are considered an effective measure of a school system’s overall health as well as a specific diagnosis of reading performance that can inform policy and implementation of curriculum and teacher training, among other things. According to Gove and Wetterberg (2011),

“The Early Grade Reading Assessment (EGRA) is one tool used to measure students’ progress toward learning to read. It is a test that is administered orally, one student at a time. In about 15 minutes, it examines a student’s ability to perform fundamental prereading and reading skills” (p. 2).

Over the past five years, we at RTI International, various donors, and experts in the field of early reading have worked to “develop, pilot, and implement EGRA in more than 50 countries and 70 languages” (p. 2). Assessments like EGRA help teachers focus on results, by describing what children know or do not know, and where instruction must focus in order to change that. For example, in Egypt, the first Arabic EGRA survey showed very clearly that children who knew letter sounds performed better on reading a short passage than children who only knew letter names; yet 50% of children tested could not identify a single letter sound. These findings signaled that a fundamental shift in instructional methods was required, and after schools adopted a phonics-based approach using letter sounds, performance increased nearly 200% over baseline one year later (Cvelich, 2011).

That said, to measure for results, teachers and their supervisors must find the tools accessible and easy to use so that the results can inform their own instruction. It also helps if the results underpin communication with parents and communities, as well as national politicians (Crouch, 2011). Too often, results from national standardized tests remain at the national level, with teachers rarely getting feedback on performance, much less feedback that is more specific than classroom averages. Furthermore, it can sometimes be months, if not years, before the results of large national assessments are made available, by which time it is too late to change instructional practices – at least for that set of children.

How can ICT play a role?

Systematic use of mobile devices to assess early literacy and numeracy, especially in developing countries, remains limited to date. Reasons include:

- Initial procurement cost of the devices and the necessity for specific training in their use;

- Lack of robust cost-benefit analyses to inform sustainability of this type of approach; and

- Limitations in local capacity to develop or manipulate the necessary data collection software.

As we state elsewhere (Pouezevara & Strigel, 2011), there are several ways in which information and communication technologies (ICT) may be applied to the assessment process to make implementation and use of the results more accessible:

- Creating or tailoring tests

- Training data collectors

- Collecting actual field data

- Manipulating and managing the data to extract and present the most significant findings.

Among these, the greatest added value is in using electronic devices for data collection and rapid analysis in place of paper-based assessments.

- Electronic devices can reduce the amount of paper needed, as well as the associated costs. Expenses dispensed with include the actual purchase of paper, clipboards, pencils, timers and so on, as well as the labor involved in the lengthy processes of checking student sheets for copy quality, stapling individual packets, counting instruments out by team and school in advance of data collection in the field, and distributing the packets. Paper-related costs such as printing, supplies, data entry, and data cleaning can make up 5%–15% of the entire budget of an EGRA implementation, according to an RTI internal review.

- Collecting data digitally means that it can move directly from a device into a database for analysis. This has several benefits in terms of efficiency: less time for data entry, lower data-entry costs, and less time to report out results. Quicker access can encourage stakeholders to do such assessments even when they need data rapidly to make important decisions based on results.

- Electronic means have the potential to reduce the number of points for human error in moving from paper to database to analysis software. As with most sophisticated survey software, programmers can build in checks or stops to help assessors recognize data-entry errors immediately, at the time of administration.

- Electronic media can be less physically challenging than dealing with paper-related administration: “An electronic solution may also reduce measurement errors arising from problems in handling the timers and other testing materials. Difficulties include forgetting to start the timer, setting the wrong amount of time on the timer, or leaving student prompt sheets with the student when they should have been taken away” (Pouezevara & Strigel, 2011, p. 188).
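The built-in checks mentioned above can be illustrated with a minimal Python sketch. It is not how Tangerine™ or any other tool described here is implemented; the record structure, the field names, and the 60-second time limit are all assumptions made for illustration. It shows how an application might flag a timer that was never started or a response that was never entered before the assessor moves on.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TimedSubtaskEntry:
    """One child's result on a timed subtask (hypothetical structure)."""
    items_total: int
    incorrect_items: List[int] = field(default_factory=list)  # 1-based item numbers
    last_item_attempted: Optional[int] = None  # None means it was never marked
    seconds_elapsed: Optional[float] = None    # None means the timer never ran
    time_limit: float = 60.0                   # assumed limit, not from the article


def find_entry_problems(entry: TimedSubtaskEntry) -> List[str]:
    """Return readable problems; an empty list means the entry can be saved."""
    problems = []

    # Timer checks: catch a forgotten or mis-set timer at administration time.
    if entry.seconds_elapsed is None:
        problems.append("timer was never started")
    elif not 0 < entry.seconds_elapsed <= entry.time_limit:
        problems.append("elapsed time is outside the allowed range")

    # Item checks: every marked item must exist on the prompt sheet.
    if any(i < 1 or i > entry.items_total for i in entry.incorrect_items):
        problems.append("an incorrect item number is not on the prompt sheet")

    # Consistency checks between the stopping point and the marked errors.
    if entry.last_item_attempted is None:
        problems.append("last item attempted was not marked")
    elif not 1 <= entry.last_item_attempted <= entry.items_total:
        problems.append("last item attempted is not on the prompt sheet")
    elif any(i > entry.last_item_attempted for i in entry.incorrect_items):
        problems.append("an item after the last one attempted is marked incorrect")

    return problems
```

An application could call `find_entry_problems` when the assessor tries to leave a subtask and refuse to continue until the returned list is empty, which is the "stop" behavior described above.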

What solutions are available?

In theory, there are many potential ways to transform paper assessments into an electronic equivalent, but a custom solution is required because of differences between oral reading assessments like EGRA and other standard surveys. For example, data have to be entered at the child’s pace on the subtasks, not that of the assessor. Therefore, survey data collection applications on the market for phones, PDAs, or portable computers typically are not appropriate.

After investigating a wide range of potential hardware and software platforms, we developed Tangerine™, a digital assessment interface for touch-screen tablet computers running the Android operating system (see photographs). It can be used for the standard EGRA approach, or customized for other types of surveys such as early math diagnostics or school information surveys.

Other organizations are also exploring a variety of solutions. Prodigy Systems, an organization that has partnered with RTI in Yemen, successfully developed iProSurveyor for use with Arabic assessments on the iPad. Its first large-scale implementation in Yemen in early 2011 confirmed many of the benefits of the digital approach.

- The database output was easily readable by any data analysis program, avoiding time-consuming manual data transcription and recoding before statistical analysis.

- Administration errors, such as forgetting to start the timer or enter a response, were minimized through built-in error control.

- Significantly fewer materials had to be transported in challenging terrain and an environment unfavorable to printed materials.

- No issues arose linked to poor printing quality or stapling.

- Total administration time was shorter than for the paper assessment (based on a comparison involving a single assessment administrator).

Cost-Benefit Analysis

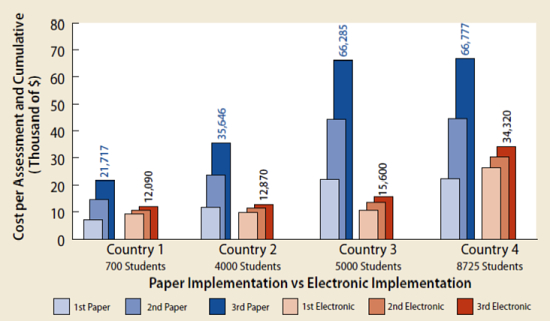

At RTI we recently conducted a preliminary cost-benefit analysis using approximate costs from recent EGRA implementations in four different African countries. The analysis aimed to identify the point of cost recovery at which the digital approach would actually yield cost savings. We modeled not one, but three data collection rounds for each country, because it is common to repeat assessments – e.g., for program baseline, midterm, and post-intervention evaluation, or annual monitoring of student outcomes.

In our cost calculation for the digital approach, we assumed that the first data collection (e.g., baseline) would carry hardware costs of USD 300 per enumerator plus a 10% contingency for spares and accessories, such as a wireless access point for field-based data backup. For the cost of a second digital data collection, we assumed re-use of the tablets from the first data collection, but factored in a 15% contingency in case replacements were needed.

To calculate the cost of a second paper-based data collection, we multiplied the paper-related costs by two, as the same costs for printing, data entry, and data cleaning would be incurred again. We followed the same process for adding a third data collection to the calculation (assuming baseline, mid-term, and post-intervention assessments).
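The calculation described above can be sketched in a few lines of Python. This is a simplified illustration, not the actual RTI model. It assumes that the 15% contingency applies to the original hardware cost in every round after the first, that all costs other than hardware and paper are identical under both approaches, and that the enumerator count and paper cost per round are placeholder inputs supplied by the reader.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CostAssumptions:
    enumerators: int
    paper_cost_per_round: float            # printing, supplies, data entry, cleaning
    tablet_unit_cost: float = 300.0        # USD per enumerator, as stated in the text
    first_round_contingency: float = 0.10  # spares and accessories
    later_round_contingency: float = 0.15  # assumed share of hardware cost per later round


def cumulative_paper_costs(a: CostAssumptions, rounds: int) -> List[float]:
    """Paper costs recur in full each round, so the total grows linearly."""
    return [a.paper_cost_per_round * r for r in range(1, rounds + 1)]


def cumulative_digital_costs(a: CostAssumptions, rounds: int) -> List[float]:
    """Hardware is bought once; later rounds add only the replacement contingency."""
    hardware = a.enumerators * a.tablet_unit_cost
    totals, running = [], 0.0
    for r in range(1, rounds + 1):
        if r == 1:
            running += hardware * (1 + a.first_round_contingency)
        else:
            running += hardware * a.later_round_contingency
        totals.append(running)
    return totals


def break_even_round(a: CostAssumptions, rounds: int = 3) -> Optional[int]:
    """First round at which cumulative digital cost is no higher than paper, if any."""
    paper = cumulative_paper_costs(a, rounds)
    digital = cumulative_digital_costs(a, rounds)
    for r, (p, d) in enumerate(zip(paper, digital), start=1):
        if d <= p:
            return r
    return None
```

With hypothetical inputs of 30 enumerators and USD 6,000 in paper-related costs per round, `break_even_round` returns 2: the hardware is not recovered in a one-time assessment but is recovered by the second administration. With a much smaller paper cost per round, the function returns `None` within three rounds, which matches the caution about small-sample or one-time assessments.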

As shown in Exhibit 1, for most small-sample data collections or one-time assessments, the cost of the hardware may not be offset by the eliminated paper-related costs. The return on investment in repeated implementations, however, is clear in terms of cumulative costs.

Exhibit 1: Cost of EGRA implementation, paper vs. electronic, for three administrations

In addition to making large national assessments more efficient, the same devices can be adapted for use as classroom-based continuous assessment tools, or as data entry interfaces for situations that still require paper-based tests. With such devices in their hands, teachers or school supervisors can do regular mastery checks more frequently, and capture the results at student and classroom levels.

The resulting data set is a rich one, and if it is supported by built-in computer-based analytics, it can be analyzed in multiple ways to indicate not only whether the methods in place are improving reading ability, but also what areas of the curriculum need more attention, and which children or groups of children are falling behind. For example, detailed item analysis at the classroom or individual level might show a recurring problem with vowel sounds, or decoding. This subsequently provides clear instructional recommendations to focus on.
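As a rough illustration of the kind of item analysis described above, the following Python sketch groups item-level responses by skill area and reports the areas where the share of correct responses falls below a threshold. The input format, the skill labels, and the 50% threshold are assumptions chosen for the example, not features of any particular assessment tool.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each response is (student_id, skill_area, answered_correctly); a hypothetical format.
Response = Tuple[str, str, bool]


def weak_skill_areas(responses: Iterable[Response], threshold: float = 0.5) -> Dict[str, float]:
    """Return skill areas whose proportion of correct responses is below the threshold."""
    correct = defaultdict(int)
    attempted = defaultdict(int)
    for _student, skill, is_correct in responses:
        attempted[skill] += 1
        if is_correct:
            correct[skill] += 1

    rates = {skill: correct[skill] / attempted[skill] for skill in attempted}
    # List the weakest areas first so they are the first thing a teacher sees.
    ordered = sorted(rates.items(), key=lambda pair: pair[1])
    return {skill: rate for skill, rate in ordered if rate < threshold}
```

Filtering the responses to a single classroom or a single child before calling `weak_skill_areas` gives the classroom-level or individual-level view; the function itself does not care which level it is given.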

Limitations and pitfalls

However, electronic administration is not necessarily a cure-all:

“Obviously, using electronic data collection at either national or classroom levels does not solve all the limitations of print-based testing; indeed, doing so might introduce new challenges. For example, although a digital solution would eliminate the risk of environmental damage to paper forms during difficult transport situations, it might pose a great risk that all assessment data could be lost at once through loss, damage, or theft of a single device, if proper backup procedures were not in place. Likewise, handling of the new device might prove to be more challenging than handling the timer and all associated materials. […] Thus, strong electronic quality control and supportive supervision during data collection would be crucial” (Pouezevara & Strigel, 2011, p. 188).

Furthermore, the EGRA approach is intended to be a simple solution that can be adopted by countries with minimum technical assistance. An electronic solution should be flexible enough that it does not create dependency of users on software programmers or hardware technicians to change test items and configuration as needed.

In terms of costs, clearly, initial investment costs for specialized hardware may be prohibitive in some situations, but our preliminary cost-benefit analysis indicated that over time the investment will pay off if used for multiple large-scale implementations. Additionally, implementers can leverage the initial investment by choosing tools that can be used for other purposes when not in use for assessment—for example, by loading tablet computers with other instructional materials, training resources, or literacy materials.

We can also foresee assessment software being linked not only to automatically generated analysis of results, but also to suggested instructional resources tailored to those results and a record of day-to-day time on task. It is also possible, using the same technologies that power Tangerine™, to adapt the assessment methodology to more common and less expensive handheld devices, such as mobile phones. These smaller devices might be particularly useful for the most rapid types of literacy assessments, such as Pratham’s yearly literacy and numeracy surveys, which involve fewer subtasks than EGRA and fewer items per test.

Another potential pitfall of making national or continuous assessments more readily accessible is that they could lead to excessive assessment and a focus on “teaching to the test” at the expense of other higher-order or student-centered activities. Too much focus on averages or aggregated results can draw attention away from the achievement of specific subgroups. Additionally, care must be taken that classroom-level results are not misused by aggregating small samples and reporting them up to the national level or attempting to generalize them.

This is a rapidly evolving field, with new technologies arriving on the market almost daily, and prices falling significantly, so it is expected that it will become increasingly feasible to implement electronic methods for literacy assessments in developing countries. Meanwhile, we are piloting various solutions and collaborating with other institutions that have similar goals. Further interest and ideas from the international development community are welcome.

References

Crouch, L. (2011). Motivating early grade instruction and learning: Institutional issues. Ch. 7 in A. Gove & A. Wetterberg, The Early Grade Reading Assessment: Applications and interventions to improve basic literacy (pp. 227–250). Research Triangle Park, NC: RTI Press. Available from http://www.rti.org/pubs/bk-0007-1109-wetterberg.pdf

Cvelich, P. (2011, September/October). Egypt shakes up the classroom. Frontlines. Washington, DC: United States Agency for International Development (USAID). Available from http://www.usaid.gov/press/frontlines/fl_sep11/FL_sep11_EDU_EGYPT.html

Davidson, M., Korda, M., & White Collins, O. (2011). Teachers’ use of EGRA for continuous assessment: The case of EGRA Plus: Liberia. Ch. 4 in A. Gove & A. Wetterberg, The Early Grade Reading Assessment: Applications and interventions to improve basic literacy (pp. 113–138). Research Triangle Park, NC: RTI Press. Available from http://www.rti.org/pubs/bk-0007-1109-wetterberg.pdf

Gove, A., & Wetterberg, A. (2011). The Early Grade Reading Assessment: An introduction. Ch. 1 in A. Gove & A. Wetterberg, The Early Grade Reading Assessment: Applications and interventions to improve basic literacy (pp. 1–38). Research Triangle Park, NC: RTI Press. Available from http://www.rti.org/pubs/bk-0007-1109-wetterberg.pdf

Pouezevara, S., & Strigel, C. (2011). Using information and communication technologies to support EGRA. Ch. 6 in A. Gove & A. Wetterberg, The Early Grade Reading Assessment: Applications and interventions to improve basic literacy (pp. 183–226). Research Triangle Park, NC: RTI Press. Available from http://www.rti.org/pubs/bk-0007-1109-wetterberg.pdf

I am not debating the logic and the analysis here, but the motive. If our perspective on the quality of a product is different, the cost-based evaluation/comparison does not work or may be irrelevant. Consider digital media versus print: when the supply is excessive, we tend to confuse quality with size, availability, and open, free access. (You would agree there are exceptions.) If you want a challenge, take pen and paper, take all your time, and write a sentence. One may feel frustrated, but it has stimulated one’s ‘self’ to think. By contrast, one can type anything just like that, because inherently it can be deleted and retyped. The psychology here is that anything erasable is of less value.

What I want to say is that the cost-based evaluation is seeing things in reverse. Call it behavioral economics, but when one spends more for the same service or product, he/she is considered ‘rich’, feels rich, and hence is happy.

Carmen, you speak here of using ICT tools to assess reading skills, which is a great use of ICT. Do you have any ideas on tools that would increase the reading skills and abilities of students? Tools that could be used by teachers or the learners themselves to increase their reading skills and test scores? Or is the best approach a motivated and skilled teacher who can use diagnostic ICT tools to improve their non-ICT reading teaching activities?

I hope M4Read, developed by iLearn4Free, can be a possible digital/mobile tool to increase reading skills, but it would be great to use EGRA to compare kids who are learning only with the traditional method to kids using M4Read and see what benefit we get.

Conducting this study in the US would make no sense because every child has access to digital learning (PBS, Hooked on Phonics….), but in other languages, or in English-speaking countries with no access to technology, that would be great.

If anyone knows a PhD student who needs a good topic, please contact me.

I am currently working with MaryFaith Mount-Cors at VIF International Education. In 2009, she conducted her dissertation research in collaboration with RTI, using EGRA data to investigate the influence of family literacy practices (particularly mothers) on the Kenyan coast. I am curious about how this data would be shared between teachers and families, particularly since the home environment is an essential component of the program.

Wayan – either has value and much depends, as so often, on the context. There are certainly ICT tools that can support reading acquisition, especially in the early years. The affordances of ICT map well here with the cognitive level of the learning objectives – that is, where reading skills focus on decoding or phonics, for example, and aim to promote automaticity. ICT here can provide excellent assistive support through rehearsal, drill, and practice in game form. My 3-year-old is engaged with letters, words, and text beyond her time in pre-school (and what mommy can muster after her work day) thanks to many child-appropriate apps that help her foster her knowledge of letter sounds and names and identification of sight words. There is also plenty of evidence that indicates that ICT can help students strengthen reading and listening comprehension skills. As a more recent meta-study indicates yet again, however, the role ICT plays in the context of classroom teaching and assessment practices, other materials, the home, etc., is critical to its potential contribution to enhanced learning outcomes. And having said this, we have not even examined yet the many additional contextual variables that we are faced with in many of the countries where we work, including IT infrastructure and issues of medium of instruction versus mother tongue. I would like to hear what others think….